Unleash your potential

It takes passion to make the extraordinary possible for patients. Fueled by collaboration, our culture of innovation enables us to explore new possibilities and bring powerful ideas to reality.

When you join our diverse, global team, you’ll harness the power of unparalleled data, advanced analytics, cutting-edge technologies, and deep healthcare and scientific expertise to drive healthcare forward.

See where your skills can take you.

Featured careers

Make an impact on patient health

Take clinical research to the next level. Intelligently connect data, in-house technologies, and analytics to enable evidence-based solutions that will help reimagine clinical development and improve patient outcomes.

Learn More

Lead the future of healthcare technology

The healthcare world of tomorrow is being built by the technologists of today. Be at the forefront of building healthcare innovations by harnessing the power of transformative technologies, industry-leading data and analytics, and advanced AI/ML capabilities.

View Jobs

Solve healthcare’s most complex challenges

Explore new paths to success in life sciences consulting. Whether it's optimizing clinical trial design, uncovering insights in real-world data, or developing market access strategies, collaborate to deliver innovative solutions to solve healthcare's most complex challenges.

Learn More

Explore the human side of data

Play a pivotal role in the evolution of healthcare. From conducting analyses to interpreting the results, use your passion and technical expertise to speed market access and help customers navigate the complexities of drug development.

View Jobs

See every challenge as an opportunity

Inspire your customers with your genuine passion for advancing healthcare. Share the unique chemistry that happens when you combine your scientific understanding with commercial strategy to benefit patients everywhere.

View Jobs

Put your learning into practice

Explore new possibilities while collaborating with colleagues who will help you achieve your goals, and experienced leaders who will support your career exploration and growth.

Learn More

For me, it’s the combination of collaborating with the smartest minds in healthcare and the passion we share for the work we do. Not only are we making a positive impact on patient lives, but we are learning from each other while doing it.

Abhinav Mathur

Senior Consultant

Advanced Analytics

At IQVIA, we have every growth opportunity at our fingertips — we can work with people across the world, enjoy a supportive culture, and at the end of the day, improve global healthcare.

Sue Bailey

Senior Director

Clinical Operations

Working in healthcare consulting is inspiring. I am glad to be part of a close community that brings together diverse skills and experiences and critical thinkers to help our customers advance human health.

Jose Gonzalez Martinez

Senior Principal

Consulting Services

From my amazing manager to collaborating with diverse teams, the people I work with ignite my passion for learning and empower my growth every day. I know my contributions are helping to enable medical breakthroughs that could advance healthcare and improve patient lives.

Detra Mason

Clinical Project Manager

Our company

Creating a healthier world is our purpose. Diverse expertise, innovation, and powerful capabilities are how we help get there.

Learn More

Culture

Our passion to make an impact is fueled by the people we work with, a curiosity to bring new ideas to life, and a focus on always doing better.

Learn More

Diversity & Inclusion

Experience a culture of inclusivity and belonging, where diverse thought and expertise spark innovation.

Learn More

Join our Global Talent Network

Let’s stay connected. Sign up to receive alerts when new opportunities become available that match your career ambitions.

Explore life at IQVIA

Innovation in everything we do We’ve deployed innovative solutions to push boundaries, make better health possible, and drive continuous improvement. Learn More July 17, 2023

Join IQVIA’s Global Talent Network Let’s stay connected. Join our global Talent Network to receive alerts when new opportunities become available that match your career ambitions. Learn More June 23, 2023

We believe in giving back We support charitable organizations around the world. It’s just another way that we’re putting patients first. Learn More July 17, 2023

Our partners We’ve built relationships with a network of partners to help drive success in our services. Learn More July 17, 2023

Disclaimer Learn More October 13, 2023

Q2 Solutions COVID-19 Vaccine Status The health and safety of our employees, customers, patients with whom we interact, and communities are essential to our commitment to helping our customers improve healthcare worldwide. Learn More October 19, 2023

Equal Opportunity Employment at Clintec Clintec is committed to providing equal employment opportunities for all, including veterans and candidates with disabilities. Learn More October 24, 2023

Clintec COVID-19 Vaccine Status The health and safety of our employees, customers, patients with whom we interact, and communities are essential to our commitment to helping our customers improve healthcare worldwide. Learn More October 24, 2023

Veterans Employee Resource Group at IQVIA Learn More October 25, 2023

Disabilities and Carers Network Employee Resource Group at IQVIA Learn More October 13, 2023

Emerging Professionals Network Employee Resource Group at IQVIA Learn More October 13, 2023

Equal Opportunity Employment at IQVIA At IQVIA, all qualified applicants will receive consideration for employment without regard to race, color, religion, sex, sexual orientation, gender identity, national origin, disability, status as a protected veteran, or any other status protected by applicable law. Learn More October 13, 2023

Impressum Impressum (German) Learn More October 17, 2023

Multi-Faith Network Employee Resource Group at IQVIA Learn More October 17, 2023

PRIDE Employee Resource Group at IQVIA Learn More October 19, 2023

Equal Opportunity Employment at Q2 Solutions Q2 Solutions is committed to providing equal employment opportunities for all, including veterans and candidates with disabilities. Learn More October 24, 2023

Black Leadership Network Employee Resource Group at IQVIA Learn More October 24, 2023

Women Inspired Network Employee Resource Group at IQVIA Learn More October 24, 2023

Race, Ethnicity And Cultural Heritage Employee Resource Group at IQVIA Learn More October 25, 2023

Technology and Analytics Careers with IQVIA India Learn More October 25, 2023

Advance your CRA career in Australia & New Zealand Watch these videos to learn how the CRA development program in Australia and New Zealand helped both Michelle and Barbara advance their careers. Learn More October 06, 2023 5 MINUTE READ Video Careers Related Content Blog Related Content - CRA Blog Related Content - Careers

An Advanced Analytics expert’s obvious choice Abhinav Mathur, Senior Consultant, Advanced Analytics, explains how IQVIA became the obvious choice during his job search for an analytical consulting opportunity. Learn More October 06, 2023 6 MINUTE READ Blog Careers Related Content Blog Related Content - AIML Blog Related Content - Careers

From CRA to Clinical Project Management Lucia shares how she used her CRA experience to take on a new role in Clinical Project Management. Learn More October 10, 2023 5 MINUTE READ Blog Careers Related Content Blog Related Content - CRA Blog Related Content - Careers Related Content - Clinical Research

7 skills to future proof your career Transformations, disruptors and trends... Don’t steer away from change. Embrace it. Learn how these seven skills can help you consider whether or not you are positioned for a future-ready career. Learn More November 03, 2023 5 MINUTE READ Article Careers Related Content Blog Related Content - Home Related Content - FAQ Related Content - Saved Blog Related Content - Careers

IQVIA’s nurses are frontline heroes A team of Nurse Advisors in the U.K. gave more than 20,000 infusions and injections to patients at the height of the pandemic. Learn More October 16, 2023 3 MINUTE READ Article Careers Related Content Blog Blog Related Content - Careers

From CRA to Clinical Operations Lead Santa highlights the limitless clinical career opportunities and benefits of working at a growing, global organization. Learn More October 16, 2023 5 MINUTE READ Blog Careers Related Content Blog Related Content - CRA Blog Related Content - Careers Related Content - Clinical Research

Make an impact during your video interview The key to making an impact during your next video interview is preparation. Check out these tips to master the art of the virtual interview. Learn More October 16, 2023 3 MINUTE READ Article Careers Related Content Blog Related Content - FAQ Related Content - Saved Blog Related Content - Careers

Tech Careers in the Middle East Our tech colleagues are developing cutting edge digital tools and applications to help hospitals, pharma companies and governments save lives and improve patient outcomes. Learn More October 18, 2023 6 MINUTE READ Video Careers Related Content Blog Related Content - AIML

How to ace an interview at IQVIA We want your true potential to shine throughout the interview process so we’re sharing advice to prepare you for your upcoming interview. Learn More November 02, 2023 4 MINUTE READ Article Careers Related Content Blog Related Content - FAQ Related Content - Saved Blog Related Content - Careers

Career growth as a CRA Discover ways to grow and stretch into any of our clinical career opportunities when you join as a CRA. Learn More October 12, 2023 6 MINUTE READ Infographic Careers Related Content Blog Related Content - CRA Blog Related Content - Careers Related Content - Clinical Research

Olivia Bicknell’s #BraveReturn to IQVIA Learn More October 18, 2023 6 MINUTE READ Article Careers Related Content Blog Related Content - Alumni

6 skills to be a successful CRA Learn what six essential skills drive the success of our Clinical Research Associates. Learn More October 12, 2023 7 MINUTE READ Article Careers Related Content Blog Related Content - CRA Blog Related Content - Careers Related Content - Clinical Research

Quick Questions with a Software Engineer Go fly fishing with Michael Amato to get the inside scoop on life as a Software Engineer at IQVIA. Learn More October 17, 2023 3 MINUTE READ Video Careers Related Content Blog Related Content - AIML Blog Related Content - Careers

One IQVIA, Multiple Careers Take your career to the next level by unlocking access to our internal AI-powered talent marketplace platform – where you can expand your skills and achieve career aspirations at IQVIA. Learn More October 17, 2023 3 MINUTE READ Blog Careers Related Content Blog Related Content - Company Related Content - Culture Related Content - Benefits Related Content - FAQ Related Content - Saved Blog Related Content - Careers

Behind the desk of an AI Engineer Deepak shares what it's like to work in AI at IQVIA, including his career journey, passion for his work and key skills for success. Learn More November 04, 2023 4 MINUTE READ Blog Careers Related Content Blog Related Content - AIML Blog Related Content - Careers

Build a career in talent acquisition In this video, Senior Vice President, Tim Wesson, talks about Global Talent Acquisition at IQVIA, covering everything from resources to benefits to career growth. Learn More October 17, 2023 4 MINUTE READ Video Careers Related Content Blog Blog Related Content - Careers

IQVIA hosts COVID-19 healthcare app challenge IQVIA developers create apps to support COVID-19 relief and respond to underserved communities. Learn More October 17, 2023 3 MINUTE READ Article Careers Related Content Blog Blog Related Content - Careers

CenterWatch names IQVIA a top CRO IQVIA named a top CRO in Overall Reputation in the 2021 WCG CenterWatch Global Site Relationship Benchmark Survey Report. Learn More October 12, 2023 2 MINUTE READ Article Careers Related Content Blog Blog Related Content - Careers

Detra makes her #ReturnToIQVIA Clinical Project Manager, Detra Mason, shares what she’s always valued about working at IQVIA and the positive experiences that encouraged her #ReturnToIQVIA Learn More October 17, 2023 4 MINUTE READ Article Careers Related Content Blog Related Content - Alumni Blog Related Content - Careers Related Content - Clinical Research

How embracing collaboration supported Bhavani’s career journey Bhavani Sivalingam, SVP of Research and Development Solutions, JAPAC, attributes some of her proudest career moments and lessons learned to the power of teamwork and leaning into your network. Learn More October 18, 2023 6 MINUTE READ Blog Careers Related Content Blog Related Content - CRA Blog Related Content - Careers Related Content - Clinical Research

5 skills to be a successful consultant: #SuccessFactors Want to accelerate your growth while working on impactful global projects in the life sciences sector? Then a consultant role at IQVIA could be the right fit for you. Here are five key traits you will need to bring to the table. Learn More October 19, 2023 6 MINUTE READ Article Careers Related Content Blog Blog Related Content - Careers

Onboarding at IQVIA: Inspire, connect, enable Interested in joining IQVIA? Here’s what you can expect during your onboarding journey at IQVIA. Learn More October 05, 2023 5 MINUTE READ Blog Careers Related Content Blog Related Content - Benefits Related Content - FAQ Related Content - Saved Blog Related Content - Careers

Career Restart – Women’s returners program IQVIA’s Careers Restart program in India focuses on hiring women who have taken a break from their career and are ready to return to the workforce. Learn More October 19, 2023 3 MINUTE READ Blog Careers Related Content Blog Related Content - Home Related Content - Culture Related Content - Diversity Related Content - Alumni Blog Related Content - Careers

Always growing: Journey from Intern to Statistical Programming Manager Brought to IQVIA through a week-long work experience program, this Statistical Programmer's career journey exemplifies IQVIA's commitment to continuous growth. Learn More October 18, 2023 6 MINUTE READ Blog Careers Related Content Blog Blog Related Content - Careers Blog Related Content - Interns Related Content - Clinical Research

5 ways to grow your career Fulfill your career aspirations at IQVIA by unlocking access to these five development resources. Learn More October 19, 2023 4 MINUTE READ Article Careers Related Content Blog Related Content - Home Related Content - Company Related Content - Culture Related Content - Benefits Related Content - FAQ Related Content - Saved Blog Related Content - Careers

IQVIANs support health activists in Bangalore Employees from IQVIA’s Q2 Solutions team distribute donations to frontline health activists in Bangalore. Learn More October 16, 2023 2 MINUTE READ Article Community Related Content Blog Blog Related Content - Community

Bulgarian team helps keep park litter-free With a passion for nature, one IQVIA team volunteered to help keep a national park litter-free. Learn More October 12, 2023 2 MINUTE READ Blog Community Related Content Blog Related Content - Culture Related Content - Benefits Blog Related Content - Community

Alumni Spotlight: IQVIA career leads to non-profit IQVIA Alumni Network member, Shekar Hariharan, shares how his experience at IQVIA positioned him to follow his passion for philanthropy and start a non-profit. Learn More October 17, 2023 3 MINUTE READ Blog Community Related Content Blog Related Content - Alumni Blog Related Content - Community

IQVIAN ranks second place in World Transplant Games 5K LaTonya Jones shares how her work at IQVIA and personal transplant journey inspired her recent accomplishment at the World Transplant Games 5K. Learn More October 20, 2023 3 MINUTE READ Blog Community Related Content Blog Blog Related Content - Community

Alumni Spotlight: Doing the math and making it count Alumna, Karrie Liu, a mathematics guru, turned her passion for numbers into a career in real world healthcare data and analytics. Learn More October 17, 2023 4 MINUTE READ Blog Community Related Content Blog Related Content - Alumni Blog Related Content - Community

Germany employees kayak to fight environmental pollution IQVIANs use their IQVIA Day to paddle across Berlin to fish plastic and other garbage from the waters. Learn More October 19, 2023 1 MINUTE READ Article Community Related Content Blog Related Content - Culture Related Content - Benefits Blog Related Content - Community

Celebrating our people: India Appreciation Week To recognize the more than 20,000 employees based in India, the local team held their first India Appreciation Week. Learn More October 20, 2023 6 MINUTE READ Blog Community Related Content Blog Blog Related Content - Community

IQVIA Italia hosts ‘We (will) Walk (you)’ challenge IQVIANs in Italy help create sustainable behaviors by competing in a walking challenge, with steps exceeding the Earth’s circumference. Learn More October 20, 2023 2 MINUTE READ Blog Community Related Content Blog Related Content - Culture Related Content - Benefits Blog Related Content - Community

A triathlete’s take on IQVIA work-life balance Talent acquisition lead, Luke Davison, describes how IQVIA provides him with the work-life balance he needs to make time for his passion for swimming, cycling and running. Learn More October 12, 2023 4 MINUTE READ Blog Culture Related Content Blog Related Content - Culture Related Content - Benefits Blog Related Content - Culture

Life-changing medication reminds mother of IQVIA’s purpose Celina Buelga is intimately familiar with the impact of IQVIA’s work and the power clinical research has on patient lives. Learn More October 16, 2023 6 MINUTE READ Blog Culture Related Content Blog Related Content - Company Blog Related Content - Culture

The best things about IQVIA Global Trials Manager, Ola Balacka, is letting you in on a secret: the top three things she likes best about working at IQVIA. Learn More October 18, 2023 5 MINUTE READ Blog Culture Related Content Blog Blog Related Content - Culture

IQVIA wins four learning excellence awards 2022 Brandon Hall Human Capital Management Excellence Awards recognize IQVIA in multiple categories. Learn More October 20, 2023 4 MINUTE READ Blog Culture Related Content Blog Blog Related Content - Culture

Tips to maintain a healthy work experience With back-to-back meetings, a lengthy task list or being stuck behind a desk, it can be challenging to maintain a healthy work experience. Here are nine valuable tips for you to try while on the job. Learn More October 12, 2023 3 MINUTE READ Article Culture Related Content Blog Related Content - Culture Related Content - Benefits Related Content - FAQ Related Content - Saved Blog Related Content - Culture

IQVIANs awarded 2022 HBA Luminary and Rising Star Susan Lipsitz has been named IQVIA’s Healthcare Businesswomen’s Association 2022 Luminary, and Sue Bailey as IQVIA’s HBA 2022 Rising Star. Learn More December 30, 2022 3 MINUTE READ Article Culture Related Content Blog Related Content - Diversity Blog Related Content - Culture

IQVIANs participate in STEPtember fitness challenge Australia and New Zealand colleagues give back while boosting their health and wellness in a STEPtember fitness challenge. Learn More October 16, 2023 2 MINUTE READ Article Culture Related Content Blog Blog Related Content - Culture

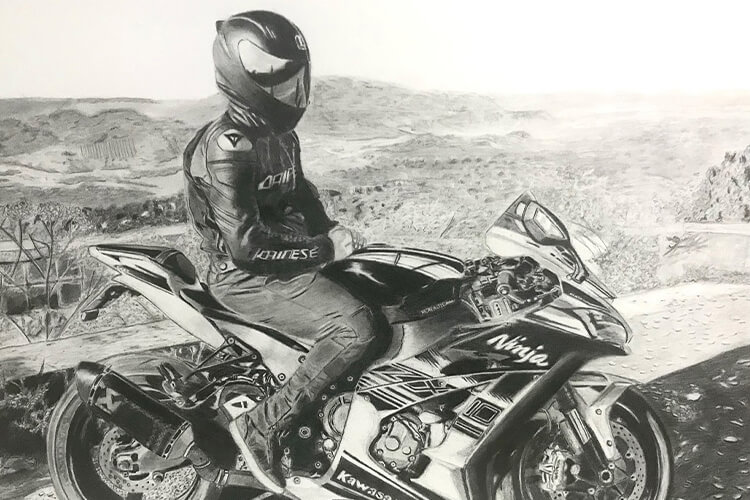

IT by day. Artist by night. Meet Paulo. While his days are spent supporting IQVIA with data, software and equipment requests, his passion for drawing comes out at night. Learn More October 16, 2023 1 MINUTE READ Article Culture Related Content Blog Related Content - AIML Blog Related Content - Culture

‘Most Admired’ for our passion in driving healthcare forward Executive Vice President and Chief Human Resources Officer, Trudy Stein, discusses what it means to be named to FORTUNE’s 2023 List of “World’s Most Admired Companies.” Learn More October 11, 2023 7 MINUTE READ Article Culture Related Content Blog Related Content - Home Related Content - Company Related Content - Culture Related Content - CRA Related Content - Saved Blog Related Content - Culture

Passion for Public Health When asked about his work at IQVIA, Deepak Batra was quick to share his passion for improving patient health and the positive impact he makes every day as a Senior Principal of Global Public Health. Learn More October 18, 2023 4 MINUTE READ Blog Culture Related Content Blog Related Content - Home Related Content - Company Related Content - Culture Blog Related Content - Culture

Excellence in video production IQVIA honored with Telly Awards for Employee Communications and Recruitment videos. Learn More October 18, 2023 1 MINUTE READ Blog Culture Related Content Blog Related Content - Company Blog Related Content - Culture

How we work We understand life’s complexities so no matter the role, we strive to find the balance of work flexibility so you can succeed both professionally and personally. Learn More November 07, 2023 4 MINUTE READ Article Culture Related Content Blog Related Content - Home Related Content - Company Related Content - Culture Related Content - Benefits Related Content - FAQ Related Content - Saved Blog Related Content - Culture

A day in the life as a Senior AI Engineer Watch as Rehan Ali gives you a look into a day in his life as a Senior Artificial Intelligence Engineer at IQVIA. Learn More October 10, 2023 2 MINUTE READ Video Careers Related Content Blog Blog - Featured Related Content - AIML Blog Related Content - Careers

The 3 best things about IQVIA Watch to find out what our leaders are saying about our people, the breadth and depth of our capabilities, and IQVIA’s commitment to a growth mindset. Learn More October 17, 2023 5 MINUTE READ Video Culture Related Content Blog Blog - Featured Related Content - Home Related Content - Company Related Content - Culture Related Content - Saved Blog Related Content - Careers

Why IQVIA? Reflections during Pride Andrew Barnhill, J.D., head of policy, Global Legal, reflects on his career journey through the lens of Pride month. Learn More June 01, 2022 3 MINUTE READ Blog Diversity & Inclusion Related Content Blog Related Content - Diversity Blog Related Content - Diversity & Inclusion

REACH volunteers at America's Grow-a-Row IQVIA’s Race, Ethnicity, and Cultural Heritage Employee Resource Group supports their community and highlights health inequalities, partnering with America’s Grow-a-Row. Learn More October 09, 2023 1 MINUTE READ Article Diversity & Inclusion Blog Related Content - Culture Related Content - Benefits Related Content - Diversity Blog Related Content - Diversity & Inclusion

IQVIA’s 2021 HBA Award Recipients IQVIANs recognized as 2021 Luminary and Rising Star by the Healthcare Businesswomen’s Association. Learn More December 30, 2021 4 MINUTE READ Article Diversity & Inclusion Related Content Blog Blog Related Content - Diversity & Inclusion

Celebrating International Women’s Day IQVIA is challenging stereotypes and celebrating the women who make up 60% of our diverse, global workforce. Hear how we are breaking the bias. Learn More March 01, 2022 2 MINUTE READ Blog Diversity & Inclusion Related Content Blog Related Content - Diversity Blog Related Content - Diversity & Inclusion

Mentoring students to inspire career decisions IQVIANs volunteered their time to help young students from disadvantaged backgrounds build their future and kick-start their career paths. Learn More October 06, 2023 3 MINUTE READ Article Diversity & Inclusion Related Content Blog Related Content - Culture Related Content - Diversity Blog Related Content - Diversity & Inclusion

IQVIA celebrates World Day for Cultural Diversity REACH members learn how different cultures influence how people communicate. Learn More May 01, 2022 3 MINUTE READ Article Diversity & Inclusion Related Content Blog Related Content - Diversity Blog Related Content - Diversity & Inclusion

What is an Employee Resource Group? IQVIA’s Employee Resource Groups provide colleagues with unique spaces to expand their community experience, collaborate in new ways and create avenues for personal development. Learn More October 12, 2023 2 MINUTE READ Blog Diversity & Inclusion Related Content Blog Related Content - Diversity Related Content - FAQ Blog Related Content - Diversity & Inclusion

Celebrating Pride Month 2022 This Pride month we come together to accept, celebrate and raise awareness. Learn More June 01, 2022 2 MINUTE READ Video Diversity & Inclusion Related Content Blog Related Content - Diversity Blog Related Content - Diversity & Inclusion

Your guide to IQVIA internships Get answers to some of the most commonly asked internship questions. Learn More November 05, 2023 7 MINUTE READ Article Interns Related Content Blog Blog Related Content - Interns

Our North America intern program What does an intern at IQVIA do? Hear from our Summer 2021 interns in North America to get an inside look. Learn More October 18, 2023 3 MINUTE READ Video Interns Related Content Blog Blog Related Content - Interns

Inaugural Project Leadership internship program Project Management Analysts share personal insights about the value of their IQVIA internships. Learn More October 20, 2023 5 MINUTE READ Blog Interns Related Content Blog Blog Related Content - Interns

The internship experience Three former interns give a peek into their experience during IQVIA's 10-week internship program. Learn More October 12, 2023 2 MINUTE READ Video Interns Related Content Blog Blog Related Content - Interns

An internship at IQVIA India Discover a career with greater purpose as an intern at IQVIA India. Here, you’ll gain on-the-job experience, partner with industry experts, and be empowered to learn and grow with us. Learn More October 20, 2023 3 MINUTE READ Blog Interns Related Content Blog - Featured Blog Related Content - Interns

European Thought Leadership internship program European Thought Leadership interns, Riming and Bayley, reflect on their time at IQVIA, sharing their first impressions and most memorable moments. Learn More October 24, 2023 5 MINUTE READ Interview Interns Related Content Blog Blog Related Content - Interns

5 skills for IT Design & Development Learn what five skills drive the success of our technology experts. Learn More October 17, 2023 6 MINUTE READ Article Careers Related Content Blog Related Content - AIML Blog Related Content - Careers

Achieving work-life balance at IQVIA Find out what employees are saying about achieving work-life balance at IQVIA and having time for activities outside of work. Learn More October 06, 2023 4 MINUTE READ Blog Culture Related Content Blog Related Content - Home Related Content - Culture Related Content - Benefits Related Content - Saved Blog Related Content - Culture

No stone left unturned: A glimpse at Wendy’s career journey President of Clinical Operations, R&DS, shares pivotal career moments and transformative experiences as well as how the power of career exploration has shaped her into the leader she is today. Learn More November 06, 2023 6 MINUTE READ Blog Careers Related Content Blog Related Content - CRA Blog Related Content - Careers Related Content - Clinical Research

A day in the life as a Clinical Operations Manager Join Candice Bond as she takes you through a day in her life as a Clinical Operations Manager at IQVIA. Learn More January 23, 2024 2 Minute Read Video Careers Related Content Blog Blog Related Content - Careers Related Content - Clinical Research

Quick Questions with a Clinical Research Associate Play a game of pickleball with Nisha Shah to get a glimpse into work and life as a CRA at IQVIA. Learn More January 30, 2024 3 minute read Blog Careers Related Content Blog - Featured Related Content - CRA Blog Related Content - Careers Related Content - Clinical Research

Gurjeet returns to IQVIA Gurjeet Chana, senior clinical research associate, reflects on what drew her back and her experience since rejoining the IQVIA team. Learn More October 11, 2023 4 MINUTE READ Article Careers Related Content Blog Related Content - Alumni Blog Related Content - Careers Related Content - Clinical Research

Well-being at IQVIA – For a healthy you We know our vision of powering smarter healthcare for everyone, everywhere starts with our efforts to support the well-being of our employees and their families. Learn More February 28, 2024 4 Minute Read Article Culture Blog - Featured Related Content - Culture Related Content - Benefits Blog Related Content - Culture

What does it mean to be an IQVIAN? Hear from colleagues as they describe our culture – our passion, the people, curiosity to innovate, and opportunities to learn every day. Learn More March 11, 2024 2 Minute Read Blog Culture Blog - Featured Related Content - Company Related Content - Culture Blog Related Content - Culture

IQVIANs volunteer at local food bank Watch as IQVIANs spend their paid volunteer day packaging donated food for community members in need. Learn More March 01, 2024 5 Minute Read Video Community Blog Blog Related Content - Community

Quick Questions with a Clinical Research Associate in Amsterdam Stroll through IQVIA’s Amsterdam, Netherlands office with Iza Cools as she shares her personal experience on what it is like to work as a CRA at IQVIA. Learn More March 19, 2024 3 Minute Read Video Careers Blog - Featured Related Content - CRA Blog Related Content - Careers Related Content - Clinical Research

Learn More March 13, 2024

IQVIA’s evolution: From past to present Discover the historical milestones which led to the creation of a new kind of company, IQVIA. Learn More March 28, 2024 5 Minute Read Article Culture Blog - Featured Related Content - Company Related Content - Culture Blog Related Content - Culture

5 steps to crafting an impactful resume for a Clinical Research Associate Looking to master the art of writing a compelling CRA resume? We’re mapping the five steps you’ll want to take so you’re ready for the next CRA role at IQVIA. Learn More April 10, 2024 6 Minute Read Article Careers Blog - Featured Related Content - CRA Blog Related Content - Careers Related Content - Clinical Research
